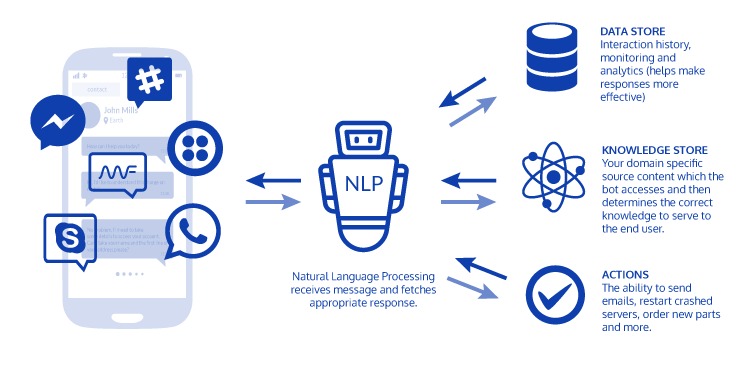

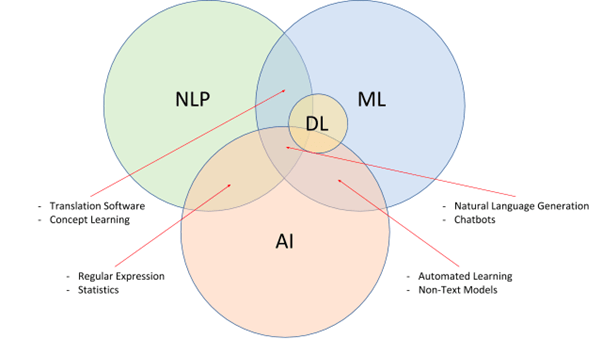

Artificial intelligence (AI) includes the field of Natural Language Processing (NLP), which focuses on enabling computers to understand, interpret, and generate human language. NLP plays a critical role in bridging the communication gap between humans and machines and supports a wide range of uses, including language translation, sentiment analysis, chatbots, and information extraction. NLP algorithms and models combine linguistic principles, statistical methods, and machine learning techniques to process and analyse natural language data. In this technical blog, we will explore the fundamental concepts, techniques, applications, and challenges of NLP. We will also discuss recent advancements, including deep learning and transformer models, and their impact on the field. Finally, we will look at potential future developments and the ethical considerations associated with NLP.

Fundamentals of Natural Language Processing

The Fundamentals of Natural Language Processing (NLP) unveil the captivating world where computers and human language converge. This mesmerising field of artificial intelligence empowers machines to comprehend, analyze, and even generate natural language—the very essence of our communication.

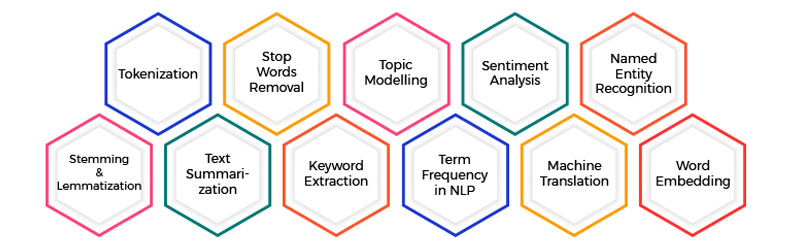

Imagine the first magical step: tokenization. It’s like sprinkling fairy dust over a text, breaking it into small units called tokens. Each word, subword, or punctuation mark becomes a treasure waiting to be discovered.
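
To make tokenization concrete, here is a minimal sketch that assumes a single regular-expression rule of our own choosing: runs of word characters (optionally with an internal apostrophe, as in "isn't") become tokens, and every other non-space character becomes its own token. Production tokenizers, including the subword tokenizers used by modern models, handle far more cases.

```python
import re

# Illustrative rule (an assumption, not a standard): a word is a run of
# letters/digits/underscores, optionally followed by an apostrophe part;
# any other non-whitespace character is a single punctuation token.
TOKEN_PATTERN = re.compile(r"\w+(?:'\w+)?|[^\w\s]")

def tokenize(text: str) -> list[str]:
    """Return word and punctuation tokens in their original order."""
    return TOKEN_PATTERN.findall(text)

print(tokenize("NLP isn't magic, it's maths!"))
# ['NLP', "isn't", 'magic', ',', "it's", 'maths', '!']
```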

But the journey doesn’t end there. NLP employs linguistic superheroes known as part-of-speech taggers. They swiftly label each word with its part of speech, such as noun, verb, or adjective, bringing order to the chaos of language.
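
One of the simplest part-of-speech taggers is the "most frequent tag" baseline: for every word, remember the tag it carried most often in tagged training data. The sketch below assumes a tiny hand-made corpus and a simplified tag set (DET, ADJ, NOUN, VERB), and it tags unseen words as NOUN, which is a common but arbitrary fallback. Real taggers also use the surrounding context.

```python
from collections import Counter, defaultdict

# Toy training data of (word, tag) pairs using a simplified tag set.
# The sentences and tags are illustrative assumptions, not a real corpus.
TAGGED_CORPUS = [
    [("the", "DET"), ("cat", "NOUN"), ("sleeps", "VERB")],
    [("a", "DET"), ("black", "ADJ"), ("cat", "NOUN"), ("runs", "VERB")],
    [("the", "DET"), ("dog", "NOUN"), ("runs", "VERB")],
]

def train_most_frequent_tag(corpus):
    """Map each word to the tag it carries most often in the corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        for word, tag in sentence:
            counts[word.lower()][tag] += 1
    return {word: tags.most_common(1)[0][0] for word, tags in counts.items()}

def tag_tokens(tokens, model, default="NOUN"):
    """Tag each token; unseen words get `default` (an assumed fallback)."""
    return [(tok, model.get(tok.lower(), default)) for tok in tokens]

model = train_most_frequent_tag(TAGGED_CORPUS)
print(tag_tokens(["The", "black", "dog", "sleeps"], model))
# [('The', 'DET'), ('black', 'ADJ'), ('dog', 'NOUN'), ('sleeps', 'VERB')]
```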

Now, brace yourself for the grand spectacle of syntactic parsing! It’s like a linguistic acrobat analyzing the structure of sentences, unveiling the hidden connections and unravelling the mystery of grammar. These revelations help extract meaning and make sense of the linguistic tapestry.

But NLP’s powers don’t stop at grammar. With named entity recognition, machines can pick out mentions of people, organizations, locations, and dates hidden within the text. It’s like uncovering hidden gems of information that feed further analysis and knowledge representation.

And what about sentiment analysis? This enchanting technique enables machines to discern the emotional undercurrents in the text—joy, anger, love, or despair. It’s as if they have developed an empathetic sixth sense, enabling them to gauge the sentiment expressed by humans.

But the true magic lies in machine learning. NLP models learn from vast amounts of text, often labelled with the answers a task requires, and uncover the intricate patterns of language. These models become linguistic wizards, capable of translating languages, answering questions, or even conjuring up new sentences.

So, buckle up and embark on a thrilling adventure through the Fundamentals of Natural Language Processing. Witness the fusion of human language and cutting-edge technology as NLP unravels the secrets of our words, paving the way for remarkable applications in information retrieval, chatbots, sentiment analysis, and beyond.

Syntactic Analysis

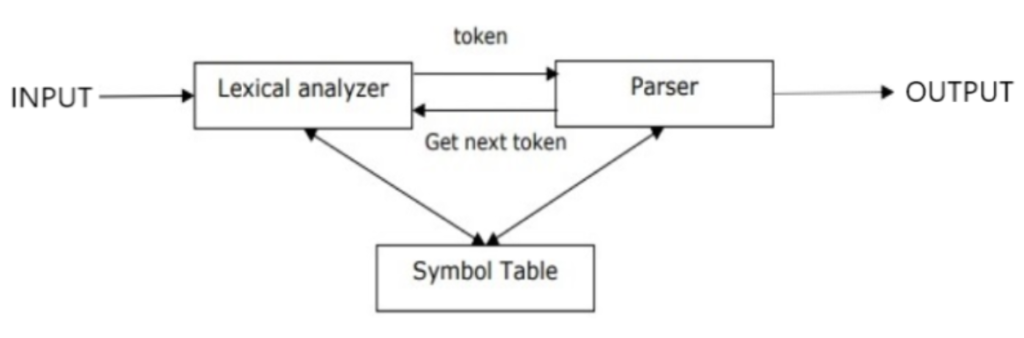

Syntactic analysis, a crucial component of natural language processing (NLP), focuses on studying and analysing the grammatical structure of sentences. It aims to uncover the rules and relationships that govern how words combine to form meaningful phrases and sentences.

In NLP, syntactic analysis involves parsing a sentence to determine its syntactic structure. This process identifies the different parts of speech, such as nouns, verbs, adjectives, and their corresponding roles and relationships within the sentence. It helps machines understand how words relate to one another, unveiling the underlying grammatical framework.

Syntactic analysis employs various techniques and algorithms. One commonly used method is constituency parsing, which breaks a sentence down into nested constituent phrases. It identifies noun phrases, verb phrases, and other structural elements, revealing the hierarchical structure of the sentence.
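
A classic algorithm for constituency parsing is CKY, which fills a chart of spans bottom-up using a grammar in Chomsky normal form. The sketch below assumes a tiny hand-written grammar and vocabulary (our own illustrative choices) and keeps only one tree per span and label; practical parsers use large probabilistic grammars or neural scoring instead.

```python
from itertools import product

# Toy grammar in Chomsky normal form (a hypothetical example grammar).
BINARY_RULES = {
    ("NP", "VP"): "S",
    ("Det", "N"): "NP",
    ("V", "NP"): "VP",
}
LEXICON = {
    "the": {"Det"}, "a": {"Det"},
    "dog": {"N"}, "cat": {"N"},
    "chased": {"V"}, "saw": {"V"},
}

def cky_parse(words):
    """Return a bracketed constituency tree for `words`, or None if the
    grammar cannot derive an S spanning the whole sentence."""
    n = len(words)
    # chart[i][j] maps a constituent label to a tree covering words[i:j]
    chart = [[{} for _ in range(n + 1)] for _ in range(n + 1)]
    for i, word in enumerate(words):
        for label in LEXICON.get(word, ()):
            chart[i][i + 1][label] = f"({label} {word})"
    for length in range(2, n + 1):           # span length
        for i in range(n - length + 1):      # span start
            j = i + length
            for k in range(i + 1, j):        # split point
                for left, right in product(chart[i][k], chart[k][j]):
                    parent = BINARY_RULES.get((left, right))
                    if parent and parent not in chart[i][j]:
                        chart[i][j][parent] = (
                            f"({parent} {chart[i][k][left]} {chart[k][j][right]})"
                        )
    return chart[0][n].get("S")

print(cky_parse("the dog chased a cat".split()))
# (S (NP (Det the) (N dog)) (VP (V chased) (NP (Det a) (N cat))))
```

The nesting in the output is exactly the hierarchy described above: a noun phrase and a verb phrase combine into a sentence, and the verb phrase itself contains another noun phrase.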

Another technique is dependency parsing, which focuses on the relationships between words by creating a directed graph called a dependency tree. Each word is represented as a node, and the edges between nodes indicate grammatical dependencies.
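
A dependency tree is often stored as one head index per word, the encoding used by the HEAD column of CoNLL-U files: position 0 means "attached to the root", and other numbers are 1-based word positions. The sketch below assumes a hand-annotated example sentence (the parse is ours, with Universal Dependencies-style labels), checks that the heads form a valid tree, and lists the edges.

```python
# Hand-annotated example (an assumption, not parser output).
TOKENS = ["She", "reads", "old", "books"]
HEADS = [2, 0, 4, 2]            # 1-based head of each token; 0 = root
RELATIONS = ["nsubj", "root", "amod", "obj"]

def is_valid_tree(heads):
    """True if exactly one token attaches to the root and following head
    links from every token reaches the root without a cycle."""
    if heads.count(0) != 1:
        return False
    for start in range(1, len(heads) + 1):
        seen, node = set(), start
        while node != 0:
            if node in seen or not 1 <= node <= len(heads):
                return False    # cycle or out-of-range head
            seen.add(node)
            node = heads[node - 1]
    return True

def edges(tokens, heads, relations):
    """List (head, relation, dependent) triples; the root's head is ROOT."""
    return [
        ("ROOT" if h == 0 else tokens[h - 1], rel, tok)
        for tok, h, rel in zip(tokens, heads, relations)
    ]

print(is_valid_tree(HEADS))  # True
for triple in edges(TOKENS, HEADS, RELATIONS):
    print(triple)
```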

Syntactic analysis plays a vital role in many NLP applications. It enables tasks such as information extraction, question answering, machine translation, and text summarization. By understanding the grammatical structure of sentences, machines can extract relevant information, generate coherent responses, and provide accurate translations.

Professionals in NLP leverage syntactic analysis to build robust language models and develop advanced algorithms. They employ machine learning techniques, including deep learning architectures, to train models that can automatically parse and analyze sentences, achieving high accuracy and efficiency.

Semantic Analysis

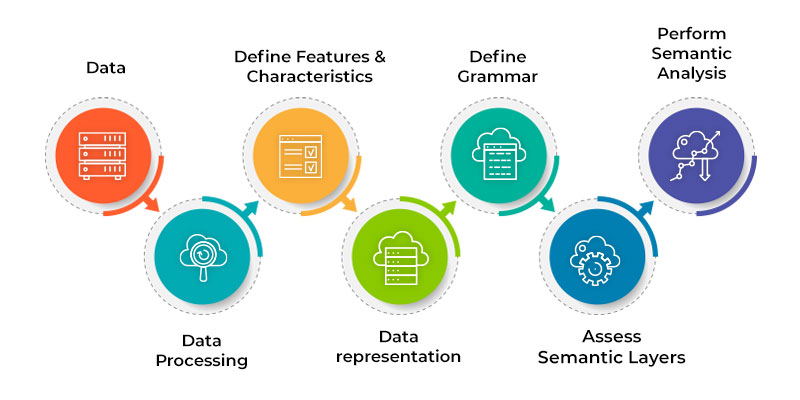

Semantic analysis, a vital component of Natural Language Processing (NLP), focuses on the interpretation and understanding of the meaning behind words, phrases, and sentences. It delves beyond the surface-level structure to comprehend the deeper semantics and context within human language.

In NLP, semantic analysis aims to bridge the gap between language and meaning. It involves extracting the intended meaning from text and representing it in a machine-readable format. This process enables machines to comprehend the underlying concepts, entities, and relationships conveyed by human language.

Semantic analysis employs various techniques to achieve this goal. One common approach is named entity recognition, which identifies and categorises named entities such as people, organisations, locations, and dates. This helps in extracting important information and understanding the context.
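
The simplest form of named entity recognition is gazetteer lookup: match the text against a list of known names. The sketch below assumes a small hypothetical gazetteer and uses greedy longest match, so "New York Times" is tagged as an organization rather than "New York" as a location. Learned NER models generalise to names that no list contains; this only illustrates the input and output of the task.

```python
# Hypothetical gazetteer: lower-cased token tuples mapped to entity types.
GAZETTEER = {
    ("new", "york"): "LOCATION",
    ("new", "york", "times"): "ORGANIZATION",
    ("ada", "lovelace"): "PERSON",
    ("london",): "LOCATION",
}
MAX_LEN = max(len(key) for key in GAZETTEER)

def tag_entities(tokens):
    """Greedy longest-match lookup: at each position try the longest
    candidate span first, then move past whatever matched."""
    entities, i = [], 0
    lowered = [t.lower() for t in tokens]
    while i < len(tokens):
        for length in range(min(MAX_LEN, len(tokens) - i), 0, -1):
            label = GAZETTEER.get(tuple(lowered[i:i + length]))
            if label:
                entities.append((" ".join(tokens[i:i + length]), label))
                i += length
                break
        else:
            i += 1  # no entity starts at this token
    return entities

print(tag_entities("Ada Lovelace read the New York Times in London".split()))
# [('Ada Lovelace', 'PERSON'), ('New York Times', 'ORGANIZATION'), ('London', 'LOCATION')]
```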

Another technique is sentiment analysis, which determines the emotional tone or sentiment expressed in text. By discerning positive, negative, or neutral sentiments, machines can understand the subjective aspects of language and infer user opinions.
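
A lexicon-based scorer is the most transparent way to see how positive, negative, and neutral labels can be assigned. The sketch below assumes a tiny hypothetical polarity lexicon and a simple negation rule (flip the sign of sentiment words shortly after "not", "never", or "no"); trained classifiers learn such cues from data instead.

```python
# Hypothetical polarity lexicon: positive words > 0, negative words < 0.
POLARITY = {"good": 1, "great": 2, "love": 2, "bad": -1, "awful": -2, "slow": -1}
NEGATORS = {"not", "never", "no"}

def sentiment(text, window=2):
    """Sum word polarities; a sentiment word within `window` tokens after a
    negator has its sign flipped (a simple heuristic)."""
    tokens = text.lower().replace(",", " ").replace(".", " ").split()
    score, negation_left = 0, 0
    for token in tokens:
        if token in NEGATORS:
            negation_left = window  # start a new negation scope
            continue
        polarity = POLARITY.get(token, 0)
        score += -polarity if negation_left else polarity
        negation_left = max(negation_left - 1, 0)
    if score > 0:
        return "positive", score
    if score < 0:
        return "negative", score
    return "neutral", score

print(sentiment("The plot was great but the ending was not good."))  # ('positive', 1)
print(sentiment("Not great, and the pacing is slow."))               # ('negative', -3)
```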

Semantic analysis also encompasses word sense disambiguation, which resolves the ambiguity that arises when a word has multiple meanings. It aims to determine the intended sense of a word in a specific context (for example, whether "bank" refers to a financial institution or a riverside), improving the accuracy of language understanding.
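
A classic approach to word sense disambiguation is the Lesk family of algorithms, which picks the dictionary sense whose definition overlaps most with the surrounding words. The sketch below is a simplified Lesk-style variant under stated assumptions: a hypothetical two-sense inventory for "bank", short hand-written glosses, and a minimal stop-word list.

```python
# Hypothetical mini sense inventory for "bank", each sense with a short gloss.
SENSES = {
    "bank/finance": "an institution that accepts deposits and lends money",
    "bank/river": "the sloping land beside a river or stream",
}
STOPWORDS = {"a", "an", "and", "at", "of", "on", "or", "she", "that", "the", "we"}

def simplified_lesk(context, senses):
    """Return the sense whose gloss shares the most non-stop-words with the
    context; on a tie, the sense listed first wins."""
    context_words = set(context.lower().split()) - STOPWORDS
    best_sense, best_overlap = None, -1
    for sense, gloss in senses.items():
        gloss_words = set(gloss.lower().split()) - STOPWORDS
        overlap = len(context_words & gloss_words)
        if overlap > best_overlap:
            best_sense, best_overlap = sense, overlap
    return best_sense

print(simplified_lesk("She deposits money at the bank", SENSES))   # bank/finance
print(simplified_lesk("We sat on the bank of the river", SENSES))  # bank/river
```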

Professionals in NLP utilise semantic analysis to develop applications such as information retrieval, question answering, text summarisation, and chatbots. By understanding the meaning behind the text, machines can provide relevant search results, generate concise summaries, and engage in more meaningful conversations with users.

Machine learning techniques, including deep learning models, are often employed in semantic analysis to train models that can automatically extract and represent semantic information from text data. These models learn from vast amounts of labelled data, enabling them to make accurate predictions and understand the nuances of language semantics.

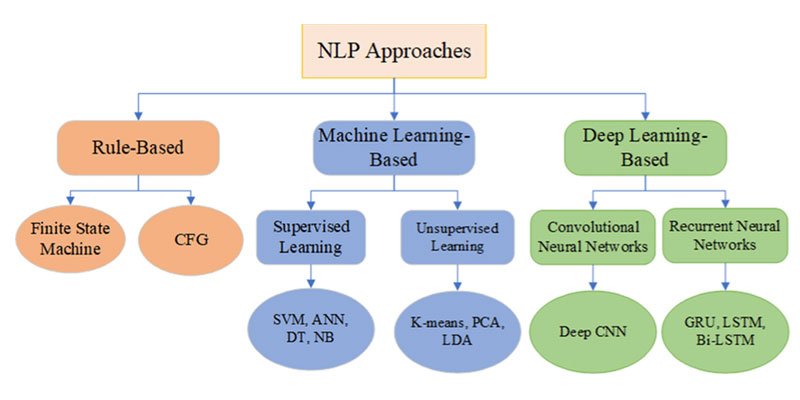

Machine Learning Approaches in NLP

Machine learning approaches in Natural Language Processing (NLP) revolutionize the way computers process and understand human language. These approaches leverage the power of algorithms and statistical models to automatically learn patterns and rules from large amounts of labelled data, enabling machines to perform complex language-related tasks.

In NLP, machine learning techniques are employed to create models capable of handling a variety of tasks. One common task is text classification, where machine learning algorithms learn to categorize text into predefined classes or categories. This is valuable for applications such as spam detection, sentiment analysis, or topic classification.
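
Multinomial Naive Bayes is a standard first model for text classification tasks such as spam detection. The sketch below assumes a four-message toy training set (our own invented examples) and whitespace tokenization; it uses add-one (Laplace) smoothing and log probabilities so that products of small numbers do not underflow.

```python
import math
from collections import Counter

def train_naive_bayes(docs):
    """docs: list of (text, label). Returns class counts, per-class word
    counts, and the vocabulary for a multinomial Naive Bayes model."""
    priors, word_counts, vocab = Counter(), {}, set()
    for text, label in docs:
        priors[label] += 1
        words = text.lower().split()
        word_counts.setdefault(label, Counter()).update(words)
        vocab.update(words)
    return priors, word_counts, vocab

def classify(text, model):
    """Return the label with the highest log-probability under add-one
    smoothing; words never seen in training are ignored."""
    priors, word_counts, vocab = model
    total_docs = sum(priors.values())
    best_label, best_score = None, -math.inf
    for label, count in priors.items():
        score = math.log(count / total_docs)
        denom = sum(word_counts[label].values()) + len(vocab)
        for word in text.lower().split():
            if word in vocab:
                score += math.log((word_counts[label][word] + 1) / denom)
        if score > best_score:
            best_label, best_score = label, score
    return best_label

TRAIN = [  # hypothetical training messages
    ("win a free prize now", "spam"),
    ("claim your free money", "spam"),
    ("meeting moved to monday", "ham"),
    ("see you at the project meeting", "ham"),
]
model = train_naive_bayes(TRAIN)
print(classify("free prize money", model))           # spam
print(classify("project meeting on monday", model))  # ham
```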

Another important application is named entity recognition, where machine learning models are trained to identify and categorize named entities such as people, organizations, and locations within the text. This enables information extraction and knowledge representation.

Machine translation, a challenging NLP task, heavily relies on machine learning approaches. Neural machine translation models, based on deep learning architectures, have made significant advancements in translating text between different languages, capturing semantic and syntactic nuances.

Machine learning approaches also play a vital role in language generation tasks such as text summarization, dialogue systems, and even chatbots. These models learn from vast amounts of training data to generate coherent and contextually appropriate responses or summaries.

To train these models, practitioners use large annotated datasets and a range of machine learning algorithms, such as support vector machines, decision trees, and deep learning architectures like recurrent neural networks (RNNs) and transformers. These algorithms learn from the data, extracting relevant features and making predictions based on learned patterns.

The success of machine learning approaches in NLP relies heavily on the quality and diversity of the training data, as well as on the design and optimization of the models. NLP professionals combine expertise in linguistics and machine learning to develop robust models that can handle the intricacies of human language.

Advanced NLP Techniques

Advanced Natural Language Processing (NLP) techniques push the boundaries of language understanding and processing, leveraging sophisticated methodologies to tackle complex linguistic challenges. These techniques go beyond the fundamentals and explore innovative approaches to extract deeper meaning from text and enhance NLP applications.

One prominent advanced technique is deep learning, specifically deep neural networks, which have revolutionized NLP. Models such as recurrent neural networks (RNNs) and transformers capture contextual information across a sentence; transformers, which rely on self-attention, are particularly effective at modelling long-range dependencies. These models have improved tasks like language translation, sentiment analysis, and text generation.

Another powerful technique is the attention mechanism. By assigning different weights to different parts of the input, attention lets a model focus on the most relevant information at each processing step. This benefits tasks such as information extraction, summarization, and question answering.
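
The weighting idea can be shown with scaled dot-product attention, the form used in transformers: each query is compared with every key, the scores are divided by the square root of the vector size, a softmax turns them into weights that sum to 1, and the output is the weighted sum of the value vectors. The sketch below uses plain Python lists and hand-picked toy vectors (assumptions for illustration); real models use learned projections and matrix libraries.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(queries, keys, values):
    """For each query q: weights = softmax(q . k / sqrt(d)) over all keys,
    output = weighted sum of the value vectors."""
    d = len(keys[0])
    outputs, weight_rows = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        output = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(output)
        weight_rows.append(weights)
    return outputs, weight_rows

# Three tokens with hand-picked 2-d key/value vectors (toy numbers).
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
outputs, weights = scaled_dot_product_attention([[0.0, 4.0]], keys, values)
print([round(w, 3) for w in weights[0]])  # the second token gets the most weight
```

Because the query points in the same direction as the second key, most of the weight lands on the second token, and the output vector is pulled towards that token's value.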

Advanced NLP techniques also delve into semantic representations, such as word embeddings and contextualized word representations. These techniques enable machines to capture the nuanced meaning of words and phrases in different contexts, enhancing language understanding and disambiguation.
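
The intuition behind word embeddings is that words used in similar contexts get similar vectors. The sketch below illustrates this with count-based co-occurrence vectors built from a tiny invented corpus and compared with cosine similarity. It is a simple stand-in chosen for clarity; learned embeddings such as word2vec, and contextual representations from transformers, are trained very differently.

```python
import math
from collections import Counter, defaultdict

# A tiny invented corpus (an assumption for illustration).
CORPUS = [
    "the cat drinks milk",
    "the dog drinks water",
    "the cat chases the dog",
    "stocks fell sharply today",
]

def cooccurrence_vectors(sentences, window=1):
    """Represent each word by counts of the words within `window`
    positions of it across the corpus."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for i, word in enumerate(words):
            lo, hi = max(0, i - window), min(len(words), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    vectors[word][words[j]] += 1
    return vectors

def cosine(u, v):
    """Cosine similarity of two sparse count vectors (0.0 if either is empty)."""
    dot = sum(u[k] * v[k] for k in u.keys() & v.keys())
    norm = math.sqrt(sum(x * x for x in u.values())) * math.sqrt(sum(x * x for x in v.values()))
    return dot / norm if norm else 0.0

vecs = cooccurrence_vectors(CORPUS)
print(round(cosine(vecs["cat"], vecs["dog"]), 3))     # 0.913
print(round(cosine(vecs["cat"], vecs["stocks"]), 3))  # 0.0: no shared contexts
```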

Moreover, advanced techniques explore domain-specific language models, where models are trained on specialized datasets or adapted to specific domains. This enables better performance in tasks related to finance, healthcare, legal, or scientific domains by leveraging domain-specific knowledge and terminology.

Furthermore, multi-modal NLP techniques combine text with other modalities, such as images or audio, allowing machines to analyze and understand multi-modal data. This opens up possibilities for applications like image captioning, visual question answering, and audio transcription.

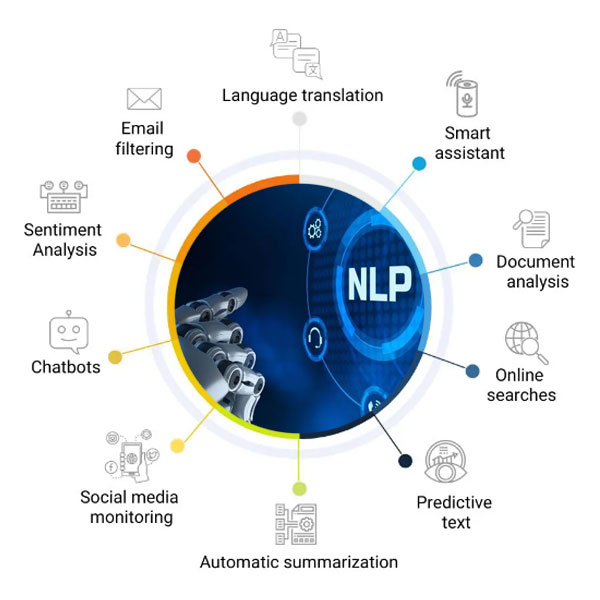

NLP Applications

NLP (Natural Language Processing) applications showcase the practical and diverse ways in which machines harness the power of language understanding and processing. These applications span various domains, providing valuable solutions and enhancing human-computer interactions.

One prominent application is information retrieval, where NLP techniques enable machines to analyze and understand vast amounts of text data. This facilitates efficient search engines, document clustering, and text categorization, helping users find relevant information quickly and accurately.
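
A classic building block of search engines is TF-IDF weighting: a term counts for more when it is frequent in a document but rare across the collection. The sketch below assumes a three-document toy collection, whitespace tokenization, idf = log(N / df), and a deliberately simple score (the sum of the query terms' weights); real engines add normalisation and ranking functions such as BM25.

```python
import math
from collections import Counter

# A tiny hypothetical document collection.
DOCS = {
    "d1": "neural machine translation with transformers",
    "d2": "sentiment analysis of product reviews",
    "d3": "statistical machine translation of news text",
}

def build_tfidf_index(docs):
    """Weight each term in each document by tf * idf, where tf is the raw
    count in the document and idf = log(N / df)."""
    tokenized = {doc_id: text.lower().split() for doc_id, text in docs.items()}
    df = Counter(term for terms in tokenized.values() for term in set(terms))
    n = len(docs)
    return {
        doc_id: {term: count * math.log(n / df[term]) for term, count in Counter(terms).items()}
        for doc_id, terms in tokenized.items()
    }

def search(query, index):
    """Rank document ids by the summed TF-IDF weights of the query terms."""
    terms = query.lower().split()
    scores = {doc_id: sum(weights.get(t, 0.0) for t in terms) for doc_id, weights in index.items()}
    return sorted(scores, key=scores.get, reverse=True)

index = build_tfidf_index(DOCS)
print(search("neural translation", index))  # ['d1', 'd3', 'd2']
```

Here "neural" appears in only one document, so it outweighs "translation", which appears in two; that is why d1 ranks above d3.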

Another crucial application is sentiment analysis, which gauges the emotions and opinions expressed in text. This is invaluable for businesses in understanding customer feedback, monitoring social media sentiment, and conducting market research.

Machine translation, a widely recognized application, allows for automated translation between different languages. NLP techniques, especially with the advent of neural machine translation models, have greatly improved translation accuracy, enabling effective communication across language barriers.

Question-answering systems leverage NLP to comprehend and respond to user queries. These systems can provide concise answers or even extract relevant information from vast text sources, facilitating efficient access to knowledge and enhancing user experiences.

Text summarization is another application that condenses lengthy documents into shorter summaries while preserving the key information. This aids in information extraction, enabling users to grasp the essence of large amounts of text efficiently.
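
Summarizers are either extractive (select existing sentences) or abstractive (write new text). The sketch below shows a simple extractive heuristic under our own assumptions: sentences are split on end punctuation, and each sentence is scored by the average document-wide frequency of its content words, so sentences about the document's main topic rank highest. Modern systems typically use trained neural models instead.

```python
import re
from collections import Counter

# Small illustrative stop-word list (an assumption; real lists are longer).
STOPWORDS = {"a", "an", "and", "are", "for", "in", "is", "it", "of", "on", "the", "to"}

def content_words(text):
    """Lower-cased words with stop words removed."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]

def summarize(text, num_sentences=1):
    """Extractive summary: score each sentence by the average document-wide
    frequency of its content words; keep the best ones in original order."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    freq = Counter(content_words(text))

    def score(sentence):
        words = content_words(sentence)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return " ".join(sentences[i] for i in sorted(ranked[:num_sentences]))

TEXT = (
    "Transformers changed machine translation. "
    "Transformers use attention to model context. "
    "The weather was pleasant yesterday."
)
print(summarize(TEXT, num_sentences=2))
# Transformers changed machine translation. Transformers use attention to model context.
```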

NLP applications also extend to chatbots and virtual assistants, enhancing human-computer interactions. Chatbots leverage language understanding and generation capabilities to engage in conversations, answer inquiries, and perform tasks like appointment scheduling or customer support.

Challenges in NLP

NLP (Natural Language Processing) presents several challenges that researchers and practitioners in the field continually strive to overcome. These challenges arise from the inherent complexity and nuances of human language, as well as the evolving nature of linguistic expression.

One major challenge is language ambiguity. Words and phrases often have multiple meanings, and their interpretation depends heavily on the context. Resolving this ambiguity accurately is crucial for tasks such as machine translation, sentiment analysis, and question answering.

Another challenge lies in handling variations in language, including dialects, slang, and informal expressions. Models trained on standard language may struggle with understanding and generating text in these varied linguistic forms.

Additionally, the lack of labelled training data poses a significant challenge. Obtaining annotated data for specific NLP tasks can be time-consuming and expensive, especially in specialized domains or low-resource languages. This scarcity of data hampers the development and generalization of models.

Ethical challenges in NLP include biases present in training data, which can result in biased predictions or unfair treatment. Efforts to mitigate these biases and promote fairness, transparency, and accountability in NLP models and systems are ongoing.

The rapid evolution of language and the emergence of new words, phrases, and concepts pose continuous challenges. NLP systems need to adapt and stay up-to-date with linguistic changes to ensure accurate understanding and processing of contemporary language.

Cross-lingual and multilingual challenges involve effectively handling languages with varying structures, morphologies, and syntactic rules. Transferring knowledge and models from resource-rich languages to low-resource languages is a challenge that requires innovative techniques.

Ethical Considerations

Ethical considerations in the field of Natural Language Processing (NLP) are paramount due to the potential impact of language technologies on individuals, communities, and society as a whole. As NLP systems become increasingly powerful, it is crucial to address and mitigate ethical concerns to ensure responsible and equitable deployment.

One key ethical consideration is bias in NLP models and data. Bias can arise from biased training data or biased algorithms, leading to unfair treatment or discriminatory outcomes. It is essential to carefully curate and evaluate training data, identify and mitigate biases, and promote fairness and inclusivity in NLP systems.

Privacy and data protection are critical ethical concerns. NLP often requires access to sensitive personal data, and it is essential to handle this data responsibly, ensuring proper consent, anonymization, and secure storage. Respecting user privacy and maintaining data confidentiality are fundamental ethical principles in NLP.
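
One basic anonymization step is redacting obvious personal identifiers before text is stored or used for training. The sketch below assumes two illustrative regular expressions, one for e-mail addresses and one for phone-like digit runs, and replaces matches with placeholders. Patterns like these miss many cases (names, addresses, unusual formats) and can over-match, so they are a starting point, not a complete privacy solution.

```python
import re

# Illustrative patterns only (assumptions); real PII detection needs far more care.
EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")
PHONE = re.compile(r"\+?\d[\d\s-]{7,}\d")

def redact(text):
    """Replace e-mail addresses and phone-like digit runs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)

print(redact("Contact jane.doe@example.com or +44 20 7946 0958."))
# Contact [EMAIL] or [PHONE].
```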

Transparency and explainability are also important. NLP models should provide clear explanations of their decision-making processes to build trust and enable users to understand and challenge the outcomes. This is especially relevant in applications such as automated decision-making and content recommendation systems.

Ethical considerations extend to issues of consent and user autonomy. NLP systems should respect user preferences and choices, providing mechanisms for users to control the collection, use, and sharing of their personal data. Informed consent and empowering users to make meaningful decisions regarding their data are ethical imperatives.

Furthermore, accountability and responsibility play a crucial role in NLP ethics. Developers and researchers should take responsibility for the impact of their systems, conduct thorough evaluations for potential risks and harms, and be prepared to address and rectify any unintended consequences.

Lastly, ethical considerations involve actively engaging with stakeholders, including users, impacted communities, and diverse voices, throughout the development and deployment of NLP systems. This ensures that the technology is inclusive, respects cultural norms, and addresses the specific needs and concerns of different communities.

Natural Language Processing (NLP) has made significant strides in enabling machines to understand and generate human language. By combining linguistic principles, statistical methods, and machine learning techniques, NLP has enabled a wide range of applications, from machine translation to sentiment analysis and chatbots. Recent advancements in deep learning and transformer models have further extended NLP capabilities. However, challenges such as ambiguity, data sparsity, and ethical considerations persist. By addressing these challenges through continued research and development, NLP will continue to help machines understand and interact with human language, transforming how we communicate and interact with technology.